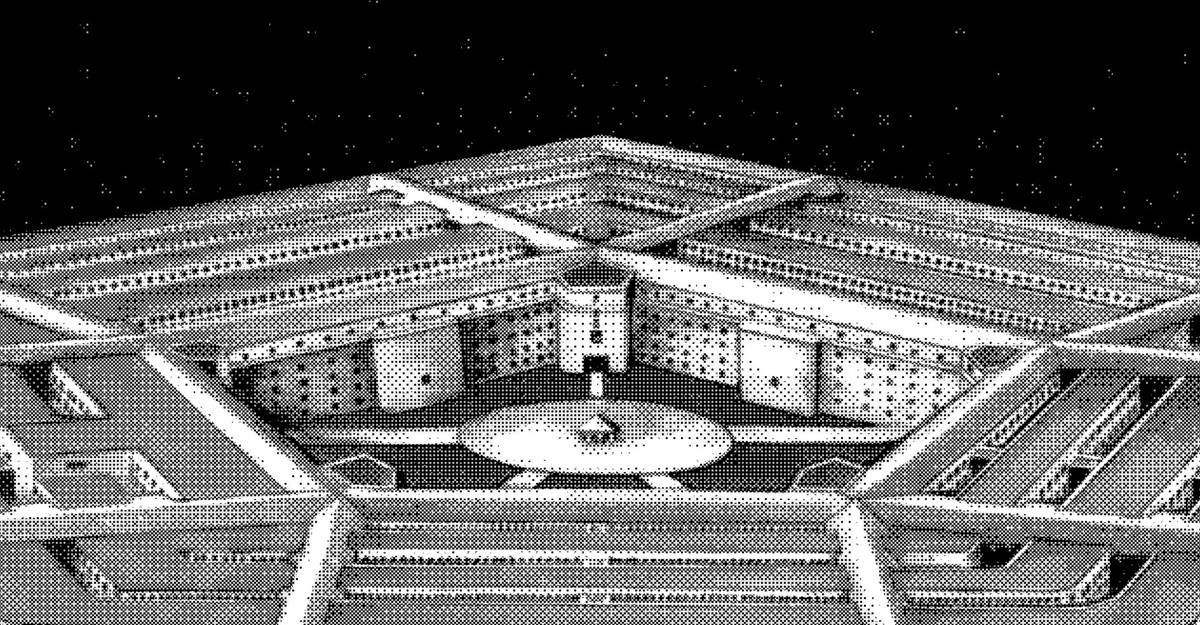

Anthropic Sues U.S. After Pentagon Labels It Supply-Chain Risk

Anthropic seeks to vacate the DOD's Feb. 27 'supply chain risk' label after refusing to allow its AI to be used for mass surveillance or autonomous weapons; OpenAI and Google employees filed a supporting amicus brief.

Overview

Anthropic sued the U.S. government seeking to block the Department of Defense's Feb. 27 designation of the company as a "supply chain risk" and to halt related enforcement, court filings show.

The DOD labeled Anthropic a supply-chain risk after the company refused to allow its AI systems to be used for mass domestic surveillance or fully autonomous weapons, according to court filings and department statements.

Roughly 30 to 40 OpenAI and Google DeepMind employees filed an amicus brief supporting Anthropic, arguing that contractual and technical safeguards are critical to prevent catastrophic misuse of AI, the brief says.

Anthropic said the designation has jeopardized hundreds of millions of dollars in contracts, including a lost $200 million Pentagon deal, and cited more than $14 billion in investments and an asserted $380 billion valuation, filings say.

Anthropic asks a federal court to declare that the president's directive exceeds his authority and to vacate the risk label, while the Pentagon has told vendors they must not let companies restrict lawful military use, filings and officials said.

Analysis

Center-leaning sources frame the dispute as a legal and political clash between a leading AI firm and the administration, emphasizing procedural overreach and novel national-security claims. They foreground Anthropic’s “unlawful campaign of retaliation” and the Pentagon’s unprecedented “supply-chain risk” label, juxtaposing company legal arguments with blunt White House rhetoric to highlight conflict.

FAQ

Anthropic refused to allow its AI systems to be used for mass domestic surveillance or fully autonomous weapons.

The designation has jeopardized hundreds of millions in contracts, including a lost $200 million Pentagon deal.

Roughly 30 to 40 OpenAI and Google DeepMind employees filed a supporting amicus brief, arguing that contractual and technical safeguards are critical to prevent catastrophic misuse of AI.

Anthropic sued in a U.S. district court in California, alleging First Amendment violations and overreach under 10 U.S.C. § 3252, and filed a second lawsuit in the U.S. Court of Appeals for the D.C. Circuit.

The designation applies only to the use of Claude as a direct part of contracts with the Department of War, not to all uses or unrelated business relationships.