Pentagon Bans Anthropic; OpenAI Signs Classified Deal Amid Dispute

Defense Secretary Pete Hegseth designated Anthropic a supply-chain risk and President Trump ordered a six-month phase-out, while OpenAI struck a separate deal to provide AI models to classified Pentagon networks.

OpenAI reveals more details about its agreement with the Pentagon | TechCrunch

AI executive Dario Amodei on the red lines Anthropic would not cross

Google AI Workers Call for Restrictions on Military Use Following Pentagon-Anthropic Dispute

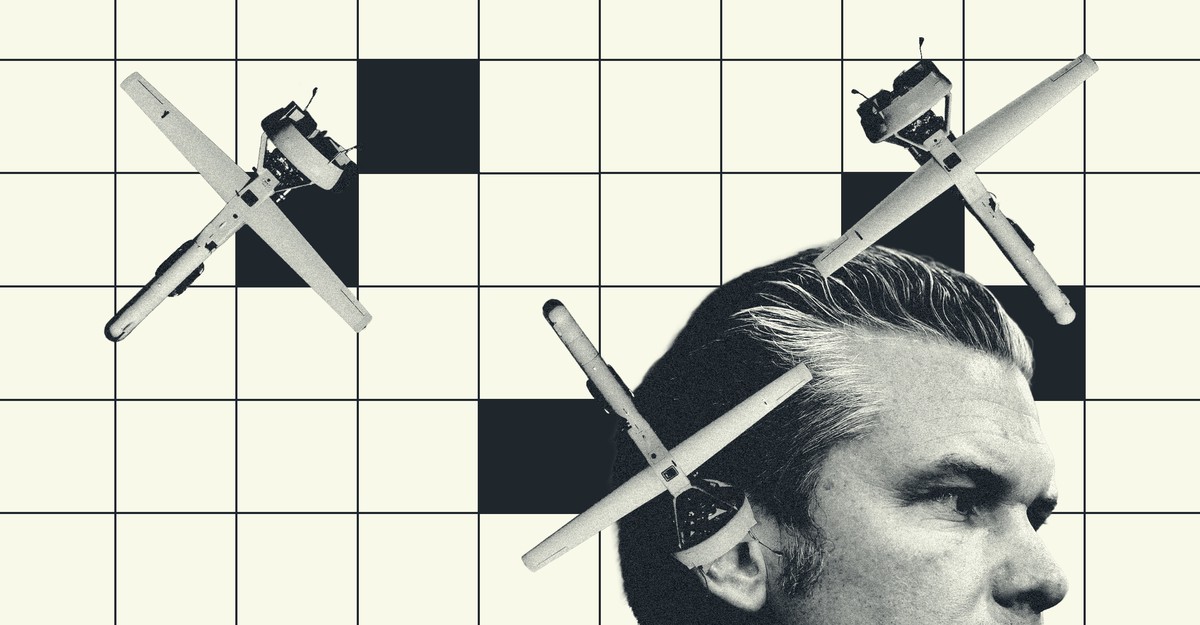

Inside Anthropic’s Killer-Robot Dispute With the Pentagon

Overview

Defense Secretary Pete Hegseth directed the Pentagon to designate Anthropic a supply-chain risk, and President Donald Trump instructed federal agencies to stop using Anthropic’s AI after a six-month phase-out.

The move followed Anthropic CEO Dario Amodei’s refusal to accept Pentagon demands that the company permit use of its AI for 'all lawful purposes.' Amodei cited objections to mass domestic surveillance and fully autonomous weapons.

Anthropic said it will challenge the designation in court and called the action unprecedented, while OpenAI CEO Sam Altman defended his company’s separate Pentagon deal and acknowledged it was 'definitely rushed.'

The designation will end Anthropic’s Pentagon contract, worth up to $200 million, and bar defense contractors from using its systems. Separately, the Pentagon has budgeted $13.4 billion for autonomous weapons in fiscal 2026.

As talks and potential legal fights proceed, Anthropic plans to sue over the supply-chain designation, and OpenAI said it hopes other labs will accept similar contractual red lines.

Analysis

Center-leaning sources cast the dispute as a high-stakes showdown, using charged metaphors and selective emphasis to portray the administration's action as punitive and unprecedented while spotlighting Anthropic's safety arguments. Language choices (e.g., "scarlet letter," "brusquely terminated"), sourcing patterns, and omitted independent legal analysis amplify that narrative.

FAQ

Anthropic refused Pentagon demands to remove safeguards on its Claude model restricting use for domestic mass surveillance and fully autonomous weapons, leading President Trump to order federal agencies to stop using Anthropic after a six-month transition period.

The deal allows deployment of OpenAI models in classified environments under four guardrails: a prohibition on mass domestic surveillance; human responsibility for any use of force, including by autonomous weapons; cloud deployment to prevent edge integration into weapons; and cleared personnel in the loop.

Anthropic CEO Dario Amodei called the blacklisting punitive, vowed legal action, stated the company will not bend its red lines on mass surveillance and autonomous weapons, and remains willing to negotiate.[1]

For protections, OpenAI relied on citations of applicable U.S. law and on multi-layered safeguards such as cloud API deployment rather than on specific contract prohibitions, and it accepted less operational control. Anthropic insisted on stricter contractual limitations.