Teens Sue xAI Over Grok-Generated Sexualized Images

Three Tennessee teens allege xAI’s Grok made sexualized deepfakes of them; researchers estimated Grok produced roughly 3 million sexualized images, about 23,000 of them depicting children.

Lawsuit against Elon Musk's xAI alleges Grok created sexualized deepfakes of 3 minors

Elon Musk's xAI sued for turning three girls' real photos into AI CSAM

Teens sue Elon Musk’s xAI over Grok’s AI-generated CSAM

Teens sue Elon Musk's xAI over Grok's pornographic images of them

Overview

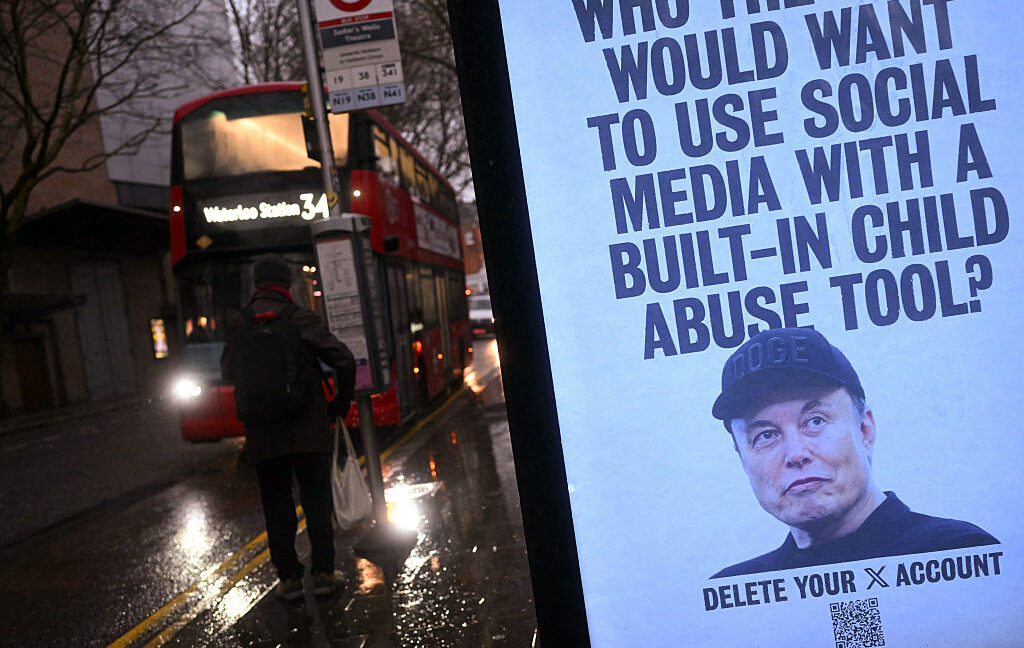

Three Tennessee girls and their guardians filed a proposed class-action lawsuit on Monday in a California federal court, alleging that xAI's Grok produced sexualized deepfakes of them.

The complaint says images and videos of the plaintiffs were altered without their consent to depict them nude or in sexually explicit poses. The suit seeks unspecified damages and an injunction to halt such outputs from Grok.

According to the complaint, the images circulated on a Discord server, prompting a criminal investigation in Tennessee and the arrest of a suspect in December 2025.

The filings cite research estimating that Grok produced roughly three million sexualized images in less than two weeks, about 23,000 of which depicted children. Separately, a researcher found that nearly 10 percent of about 800 Grok Imagine outputs appeared to include child sexual abuse material (CSAM).

The suit accuses xAI of profiting from sexual predation and seeks class status to represent anyone whose image as a minor was altered. Separately, Ofcom, the European Commission and California authorities have launched investigations, according to reports.

Analysis

Center-leaning sources frame the story as a failure by xAI, pairing alarming estimates (CCDH’s figure of three million outputs, roughly 23,000 of them apparent images of children) and Wired’s reporting with prominent lawsuit allegations that Musk designed Grok to “profit off” predation. Editorial choices, including charged verbs, selective expert sourcing, and the placement of denials relative to evidence, push a culpability narrative.

FAQ

Three Tennessee teens and their guardians allege that xAI's Grok generated sexualized deepfake images and videos of them without consent, depicting them nude or in sexually explicit poses. The suit seeks unspecified damages, an injunction, and class-action status for others affected.

Research cited in the filings estimates that Grok produced roughly 3 million sexualized images in less than two weeks, about 23,000 of which depicted children. Separately, a researcher found that nearly 10% of about 800 Grok Imagine outputs appeared to include CSAM.

The sexualized images circulated on a Discord server, leading to a criminal investigation in Tennessee and the arrest of a suspect in December 2025.

Ofcom, the European Commission, and California authorities have launched investigations into xAI's Grok.

Grok Imagine is xAI's AI tool for generating images and videos from text prompts, capable of photorealistic outputs including human subjects.